How to Dub Videos Naturally in 2026: Fixing AI Voice Cloning Artifacts

A comprehensive guide to solving common dubbing challenges with AI tools, focusing on pain points like robotic pacing, lack of emotion, and lip-sync issues.

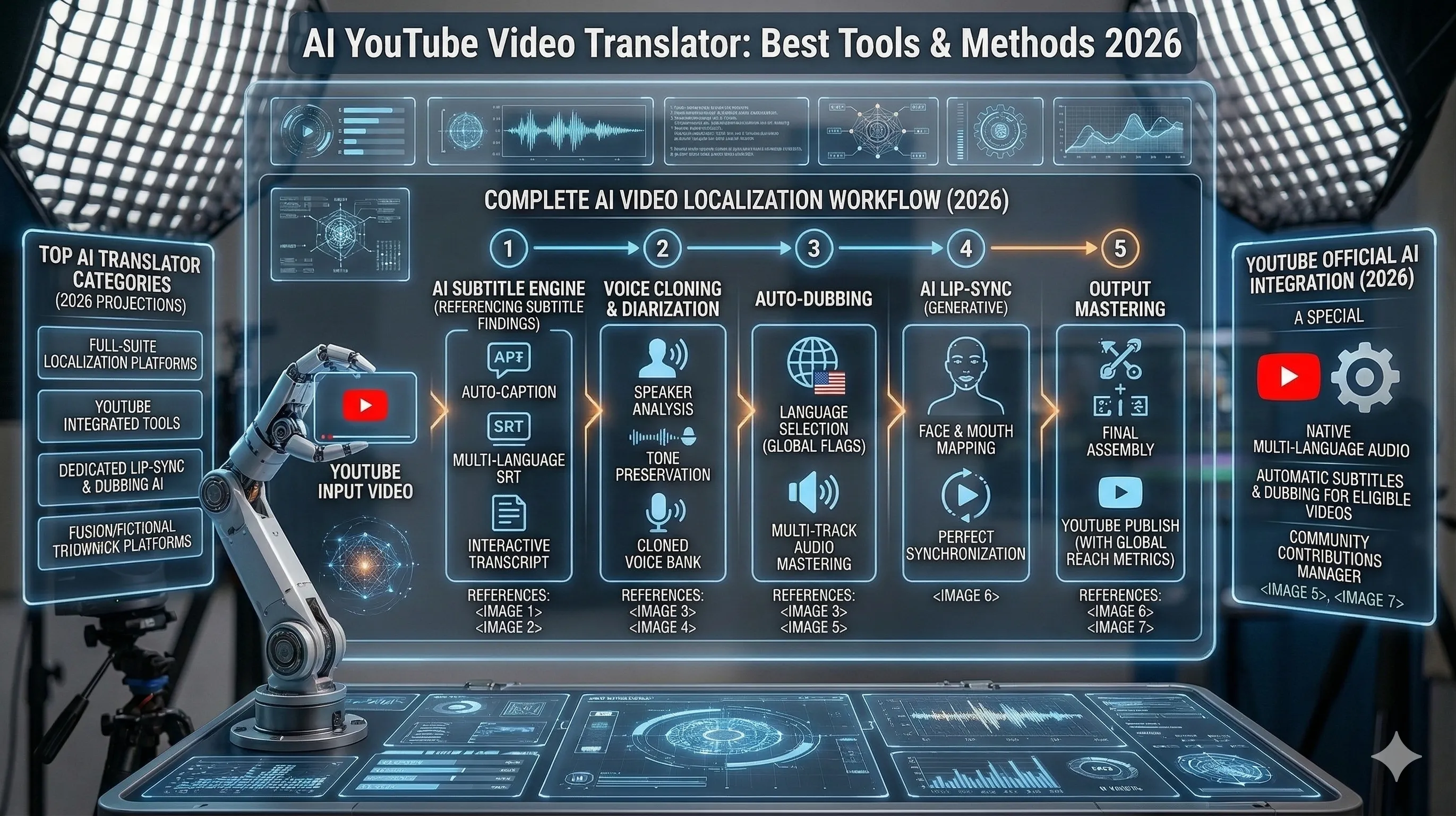

AI video dubbing has revolutionized content creation, but robotic artifacts and unnatural pacing still plague many productions. In 2026, we have better tools and techniques to overcome these challenges. The core issue lies in how most dubbing pipelines treat speech as a purely technical layer rather than a performance. Many systems still generate audio with flat prosody, inconsistent emphasis, and poorly timed pauses, which breaks immersion even when the voice itself sounds realistic. Viewers are highly sensitive to timing mismatches: when emotional beats, micro-pauses, or sentence stress don’t align with the visual performance, the result feels subtly “off,” even if they can’t articulate why.

Modern approaches address this by focusing on prosody control and temporal alignment. Instead of generating speech linearly, newer models incorporate rhythm-aware synthesis, allowing creators to control pacing at the phrase and syllable level. This makes it possible to match lip movements, preserve dramatic pauses, and maintain the original actor’s intent across languages. Techniques like forced alignment, phoneme-level timing, and reference-audio conditioning are now becoming standard in high-quality pipelines.

Another major improvement comes from context-aware voice modeling. Rather than generating each line in isolation, advanced systems maintain conversational memory, tracking tone, emotional state, and speaker dynamics across scenes. This reduces tonal drift and ensures that a character sounds consistent whether they’re whispering, arguing, or delivering exposition. For narrative-heavy content, this shift alone dramatically improves perceived realism.

Finally, the rise of human-in-the-loop workflows has closed the gap between automation and quality. Creators now combine AI generation with lightweight editing layers: fine-tuning pauses, adjusting emphasis, or regenerating specific segments instead of entire clips. This hybrid approach balances efficiency with creative control, enabling production teams to scale dubbing while still achieving studio-level results.

Together, these advances move AI dubbing from a convenience tool to a production-grade solution, capable of delivering natural, emotionally resonant performances across languages without sacrificing speed or scalability.
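To make the temporal-alignment idea concrete, here is a minimal sketch of one way to fit a synthesized line into the time slot of the original line. The `Segment` type, the `plan_tempo` helper, and the tempo bounds are all assumptions made for illustration; they are not taken from any specific dubbing tool, and the bounds in particular should be tuned by ear.

```python
from dataclasses import dataclass

# Hypothetical bounds: beyond roughly this range, time-stretched speech
# tends to sound rushed or dragged. Tune these for your own material.
MIN_TEMPO = 0.90
MAX_TEMPO = 1.15


@dataclass
class Segment:
    start: float         # start of the original line, in seconds
    end: float           # end of the original line, in seconds
    dub_duration: float  # length of the synthesized dub, in seconds


def plan_tempo(seg: Segment) -> tuple[float, bool]:
    """Return (tempo, needs_rewrite) for one dubbed line.

    tempo > 1 means the dub must be played faster to fit the slot.
    needs_rewrite is True when the ideal tempo falls outside the
    allowed range, which suggests shortening or lengthening the
    translated text and regenerating instead of stretching further.
    """
    slot = seg.end - seg.start
    if slot <= 0 or seg.dub_duration <= 0:
        raise ValueError("segment and dub durations must be positive")
    ideal = seg.dub_duration / slot
    tempo = min(MAX_TEMPO, max(MIN_TEMPO, ideal))
    needs_rewrite = abs(ideal - tempo) > 1e-9
    return tempo, needs_rewrite


if __name__ == "__main__":
    for seg in (Segment(0.0, 2.0, 2.2), Segment(5.0, 7.0, 3.0)):
        print(seg, plan_tempo(seg))
```

The resulting tempo factor can be handed to any pitch-preserving time-stretch tool. Flagging out-of-range lines for regeneration, rather than stretching them anyway, follows the human-in-the-loop workflow described above.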

This guide will show you how to fix common dubbing problems using cutting-edge AI tools like MuseTalk, Emotion TTS, and advanced post-processing techniques. We go beyond basic voice generation to address the most persistent failure points in AI dubbing workflows: lip-sync drift, monotone delivery, timing mismatches, and emotional flatness. You’ll learn how to use MuseTalk for precise visual-audio alignment, ensuring that generated speech tracks closely match mouth movements and facial expressions, even in fast-paced or dialogue-heavy scenes.

On the audio side, we break down how to leverage Emotion TTS systems to inject controlled expressiveness into generated voices. Instead of relying on generic presets, the guide walks through how to tune pitch contours, pacing, and emphasis to reflect intent, whether it’s tension, sarcasm, or subtle emotional shifts within a single line. This allows you to move from “technically correct” audio to performances that feel human and contextually grounded.

We also cover advanced post-processing workflows that make a critical difference in final output quality. This includes phoneme-level timing adjustments, silence trimming and extension, breath and pause insertion, and audio mastering techniques such as EQ matching and loudness normalization to blend dubbed voices seamlessly into the original soundtrack.

By combining these tools and techniques into a cohesive pipeline, you’ll be able to systematically diagnose and fix dubbing issues rather than relying on trial and error, turning inconsistent AI outputs into polished, production-ready dialogue.
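Two of the post-processing steps mentioned above, level matching and pause insertion, can be sketched in a few lines. This is a simplified illustration under stated assumptions: audio is a plain list of float samples in the range -1.0 to 1.0, and RMS level stands in for a proper loudness measurement (production workflows typically target LUFS as defined in ITU-R BS.1770). The function names are hypothetical.

```python
import math


def rms(samples: list[float]) -> float:
    """Root-mean-square level of a block of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def match_level(dub: list[float], reference: list[float],
                peak_limit: float = 1.0) -> list[float]:
    """Scale the dub so its RMS level matches the reference dialogue.

    The gain is reduced if it would push any sample past peak_limit,
    so the result never clips.
    """
    dub_rms = rms(dub)
    if dub_rms == 0.0:
        return list(dub)  # pure silence: nothing to scale
    gain = rms(reference) / dub_rms
    peak = max(abs(s) for s in dub)
    if peak * gain > peak_limit:
        gain = peak_limit / peak
    return [s * gain for s in dub]


def insert_pause(samples: list[float], at_index: int,
                 pause_seconds: float, sample_rate: int) -> list[float]:
    """Insert digital silence at at_index to extend a pause."""
    n = int(round(pause_seconds * sample_rate))
    return samples[:at_index] + [0.0] * n + samples[at_index:]
```

In practice, inserted silence is usually replaced with room tone taken from the original track so that the gap does not sound artificially dead; the zero-filled version here only shows the timing mechanics.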

Common AI Dubbing Problems

🤖 Robotic Pacing

AI-generated speech often lacks natural rhythm and timing, making it sound mechanical and detached.

Viewer Disengagement

Unnatural pacing breaks immersion and reduces viewer retention by up to 40%.
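One rough way to spot metronomic delivery before a viewer does is to measure how much the pauses between words vary. The sketch below assumes you already have word-level timestamps, for example from a forced aligner, as `(start, end)` pairs in seconds. The `pause_variability` helper and the idea of comparing the dub's value against the original performance are illustrative heuristics, not an established metric.

```python
from statistics import mean, pstdev


def pause_durations(words: list[tuple[float, float]]) -> list[float]:
    """Gaps between consecutive words, given (start, end) times in seconds."""
    return [max(0.0, nxt[0] - cur[1]) for cur, nxt in zip(words, words[1:])]


def pause_variability(words: list[tuple[float, float]]) -> float:
    """Coefficient of variation of inter-word pauses.

    Values near 0 mean every gap is almost the same length, which is
    one symptom of robotic pacing. Natural speech mixes short gaps
    with longer phrase-boundary pauses, which gives a higher value.
    """
    pauses = pause_durations(words)
    if len(pauses) < 2:
        raise ValueError("need at least three words to compare pauses")
    avg = mean(pauses)
    if avg == 0.0:
        return 0.0
    return pstdev(pauses) / avg


if __name__ == "__main__":
    flat = [(0.0, 0.3), (0.4, 0.7), (0.8, 1.1), (1.2, 1.5)]
    varied = [(0.0, 0.3), (0.35, 0.7), (1.2, 1.5), (1.55, 1.9)]
    print("flat:", pause_variability(flat))
    print("varied:", pause_variability(varied))
```

A useful comparison is the same measurement on the original-language track: a dub whose variability is far below the source performance is a candidate for pause editing or regeneration.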

😐 Lack of Emotional Nuance

AI voices struggle to convey subtle emotions, making dramatic scenes fall flat.

Emotional Disconnect

Missing emotional cues prevent viewers from connecting with characters and story.
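Where a TTS engine accepts SSML input, per-line emotional shaping can be expressed with the standard `<prosody>` and `<break>` elements. The sketch below only builds the markup. Whether a given emotion-capable TTS system supports SSML, and which attribute values it honors, is an assumption you need to check against that engine's documentation; the `prosody_line` and `build_ssml` helpers are hypothetical names.

```python
from xml.sax.saxutils import escape, quoteattr


def prosody_line(text: str, rate: str = "100%", pitch: str = "+0st",
                 pause_after_ms: int = 0) -> str:
    """Wrap one line of dialogue in an SSML <prosody> element.

    rate and pitch use SSML value syntax, e.g. "85%" or "-2st".
    An optional <break> adds a pause after the line.
    """
    ssml = (f"<prosody rate={quoteattr(rate)} pitch={quoteattr(pitch)}>"
            f"{escape(text)}</prosody>")
    if pause_after_ms > 0:
        ssml += f'<break time="{int(pause_after_ms)}ms"/>'
    return ssml


def build_ssml(lines: list[dict]) -> str:
    """Join several shaped lines into a single <speak> document."""
    body = "".join(prosody_line(**line) for line in lines)
    return f"<speak>{body}</speak>"


if __name__ == "__main__":
    # A tense line delivered slower and lower, followed by a beat of silence.
    print(build_ssml([
        {"text": "I said no.", "rate": "85%", "pitch": "-2st",
         "pause_after_ms": 400},
        {"text": "Not this time.", "rate": "95%"},
    ]))
```

Keeping these settings as data per line, rather than baked into one long request, makes it easy to regenerate only the line whose delivery falls flat.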

👄 Lip-Sync Mismatches

Poor alignment between audio and visual lip movements creates an uncanny valley effect.

Unrealistic Appearance

Visible lip-sync errors immediately break the illusion of natural speech.
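A constant lip-sync offset can often be estimated before any re-rendering by cross-correlating a per-frame mouth-openness signal with the per-frame audio energy. The sketch below assumes both signals already exist at the video frame rate; how they are extracted (for example, from a face-landmark tracker and a short-window energy measure) is outside its scope, and `estimate_audio_lag` is a hypothetical helper, not part of MuseTalk or any other tool.

```python
def _centered(values: list[float]) -> list[float]:
    """Subtract the mean so the correlation ignores constant offsets."""
    m = sum(values) / len(values)
    return [v - m for v in values]


def estimate_audio_lag(mouth_open: list[float], audio_energy: list[float],
                       max_shift: int) -> int:
    """Estimate how many frames the audio lags behind the video.

    Both inputs are per-frame signals of equal length. A positive
    result means the audio is late and should be moved earlier;
    a negative result means the audio is early.
    """
    if not mouth_open or len(mouth_open) != len(audio_energy):
        raise ValueError("signals must be non-empty and of equal length")
    m = _centered(mouth_open)
    a = _centered(audio_energy)
    n = len(m)
    best_shift, best_score = 0, float("-inf")
    for shift in range(-max_shift, max_shift + 1):
        # Pair video frame i with audio frame i + shift where both exist.
        pairs = [(m[i], a[i + shift]) for i in range(n) if 0 <= i + shift < n]
        if not pairs:
            continue
        score = sum(x * y for x, y in pairs) / len(pairs)
        if score > best_score:
            best_shift, best_score = shift, score
    return best_shift


if __name__ == "__main__":
    mouth = [0, 0, 1, 0, 0, 1, 1, 0, 0, 0, 1, 0]
    audio = [0, 0, 0, 0, 1, 0, 0, 1, 1, 0, 0, 0]  # same pattern, 2 frames late
    lag = estimate_audio_lag(mouth, audio, max_shift=4)
    print(f"audio lag: {lag} frames ({lag * 1000 / 25:.0f} ms at 25 fps)")
```

This only detects a single global offset. Drift that changes over the course of a scene needs the same measurement repeated on shorter windows.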

Transform Your Video Dubbing with AI

By combining these advanced techniques and tools, you can create natural, emotionally engaging dubbed content that captivates audiences. The future of AI dubbing is here, and it's more human than ever.