Inside the AI Lip Sync Pipeline: MuseTalk, Sync.co, and Production Architecture

This deep dive examines the technical architecture behind modern AI lip sync systems: MuseTalk's open-source implementation, Sync.co's commercial API, and production-grade pipeline design for enterprise-scale video dubbing and facial animation.

End-to-End Lip Sync Pipeline Architecture

A production-grade AI lip sync pipeline transforms video and audio inputs into synchronized dubbed content through a fixed sequence of processing stages. The complete workflow: Input Video → Face Detection → Landmark Extraction → Audio Analysis → Lip Movement Generation → Face Reconstruction → Post-processing → Output Video.

Each stage addresses specific technical challenges: face detection isolates speakers, landmark extraction tracks facial features, audio analysis processes speech patterns, lip movement generation creates synchronized animations, face reconstruction blends new lips with existing expressions, and post-processing ensures seamless integration.
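The stage sequence above can be sketched as a list of functions that each read and extend a shared context. This is a minimal illustration, not any particular system's implementation: the `Stage` and `Pipeline` classes, the `placeholder` helper, and the file names are all hypothetical, and each stage here only records that it ran.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Context = Dict[str, Any]


@dataclass
class Stage:
    name: str
    run: Callable[[Context], Context]


@dataclass
class Pipeline:
    stages: List[Stage]
    trace: List[str] = field(default_factory=list)

    def __call__(self, ctx: Context) -> Context:
        # Each stage receives the context produced by the previous one
        for stage in self.stages:
            ctx = stage.run(ctx)
            self.trace.append(stage.name)
        return ctx


def placeholder(key: str) -> Callable[[Context], Context]:
    """Return a stand-in stage that only records that `key` was produced."""
    def run(ctx: Context) -> Context:
        return {**ctx, key: f"<{key}>"}
    return run


# Stage names follow the workflow described in the text
STAGE_NAMES = [
    "face_detection",
    "landmark_extraction",
    "audio_analysis",
    "lip_movement_generation",
    "face_reconstruction",
    "post_processing",
]

pipeline = Pipeline([Stage(name, placeholder(name)) for name in STAGE_NAMES])
result = pipeline({"video": "input.mp4", "audio": "dub.wav"})
print(pipeline.trace)
```

Keeping every stage behind the same signature makes it straightforward to swap one implementation (for example, a different face detector) without touching the rest of the chain.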

Modern systems like MuseTalk and commercial APIs reach a claimed 90%+ realism by combining GANs (Generative Adversarial Networks), diffusion models, and temporal consistency algorithms. The sections below cover each component, how the components are integrated, and the trade-offs between open-source and commercial solutions.

Why Technical Architecture Matters for Lip Sync

Realism vs. Computational Cost: Higher resolution models produce more realistic results but require significant GPU resources and processing time. Production systems must balance quality with operational costs.

Temporal Consistency: Maintaining smooth transitions between frames and preventing flickering or artifacts requires sophisticated temporal modeling and consistency constraints across the entire video sequence.

Identity Preservation: The system must maintain the original speaker's identity while only modifying mouth movements. This requires careful separation of identity features from speech-related facial movements.

Scalability Challenges: Real-time applications require streaming processing and low-latency inference, while batch processing can optimize for throughput and cost efficiency in production environments.

Core Technical Components

Face Detection and Landmark Extraction

The pipeline begins with face detection, which locates speakers in each video frame. Once faces are identified, the system extracts facial landmarks: 468 key points (the count produced by MediaPipe Face Mesh) covering the mouth, eyes, and other facial features.

This detailed mapping allows the system to understand the exact shape and position of the speaker's mouth at any moment, creating the foundation for accurate lip synchronization. The technology works reliably across different lighting conditions, angles, and even with multiple people in the frame.
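As a rough illustration of how landmarks describe mouth shape, the sketch below converts normalized landmarks to pixel coordinates and computes a simple openness ratio. The four landmark indices are assumed to follow the MediaPipe Face Mesh numbering and should be verified against the landmark model actually in use; the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]

# Assumed MediaPipe Face Mesh indices; verify against your landmark model
LEFT_CORNER, RIGHT_CORNER = 61, 291
UPPER_INNER_LIP, LOWER_INNER_LIP = 13, 14


def to_pixels(landmarks: Sequence[Point], width: int, height: int) -> List[Point]:
    """Scale normalized (0..1) landmark coordinates to pixel coordinates."""
    return [(x * width, y * height) for x, y in landmarks]


def mouth_openness(pixels: Sequence[Point]) -> float:
    """Vertical lip gap divided by mouth width; 0.0 means fully closed."""
    mouth_width = math.dist(pixels[LEFT_CORNER], pixels[RIGHT_CORNER])
    lip_gap = math.dist(pixels[UPPER_INNER_LIP], pixels[LOWER_INNER_LIP])
    return lip_gap / mouth_width if mouth_width > 0 else 0.0


# Synthetic 468-point face: only the four mouth points are meaningful here
face = [(0.5, 0.5)] * 468
face[LEFT_CORNER], face[RIGHT_CORNER] = (0.4, 0.5), (0.6, 0.5)
face[UPPER_INNER_LIP], face[LOWER_INNER_LIP] = (0.5, 0.48), (0.5, 0.52)

pixels = to_pixels(face, width=100, height=100)
print(f"openness: {mouth_openness(pixels):.2f}")
```

Dividing by mouth width keeps the measure roughly independent of how large the face appears in the frame.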

Audio Processing and Feature Extraction

The audio processing pipeline converts target speech into temporal features that drive lip sync generation. Systems extract mel-spectrograms, MFCCs, and phoneme alignments from audio sampled at 16 kHz. In this implementation, the waveform is loaded with librosa.load(), and librosa.feature.melspectrogram() computes a mel-spectrogram (80 mel bins, 1024-point FFT, hop length of 160 samples, which is one frame every 10 ms) that is then converted to a dB scale. The waveform is also passed through Wav2Vec2Processor and Wav2Vec2Model (facebook/wav2vec2-base), whose last_hidden_state provides contextual embeddings. Finally, forced alignment against the transcript, via a helper called get_phoneme_alignment(audio_path, transcript), yields phoneme boundaries for precise viseme timing. The returned dict contains mel_spectrogram, audio_embeddings, and phoneme_alignment. Together these capture the spectral envelope, phoneme timing, and contextual speech patterns that the generator maps to mouth movements.
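To make the timing concrete, the sketch below relates mel frames to video frames and turns a phoneme alignment into one viseme label per video frame. The 25 fps video rate, the viseme table, and the `(phoneme, start_sec, end_sec)` alignment format are assumptions for illustration; the article does not specify what get_phoneme_alignment returns.

```python
from typing import List, Tuple

SAMPLE_RATE = 16_000   # from the text
HOP_LENGTH = 160       # from the text
VIDEO_FPS = 25         # assumption: common video frame rate

MEL_FPS = SAMPLE_RATE / HOP_LENGTH  # 100 mel frames per second


def mel_window(video_frame: int, fps: float = VIDEO_FPS) -> range:
    """Mel-frame indices that fall inside one video frame."""
    per_frame = MEL_FPS / fps
    start = int(round(video_frame * per_frame))
    end = int(round((video_frame + 1) * per_frame))
    return range(start, end)


# Illustrative, heavily simplified phoneme-to-viseme table (ARPAbet-style labels)
PHONEME_TO_VISEME = {
    "P": "closed", "B": "closed", "M": "closed",
    "F": "lip_teeth", "V": "lip_teeth",
    "AA": "open", "AE": "open",
    "OW": "rounded", "UW": "rounded",
}

Alignment = List[Tuple[str, float, float]]  # (phoneme, start_sec, end_sec)


def visemes_per_frame(alignment: Alignment, n_frames: int,
                      fps: float = VIDEO_FPS) -> List[str]:
    """Label each video frame with the viseme active at the frame's midpoint."""
    frames = []
    for i in range(n_frames):
        t = (i + 0.5) / fps
        label = "rest"  # default when no phoneme covers this instant
        for phoneme, start, end in alignment:
            if start <= t < end:
                label = PHONEME_TO_VISEME.get(phoneme, "rest")
                break
        frames.append(label)
    return frames


alignment = [("M", 0.00, 0.08), ("AA", 0.08, 0.24)]
print(list(mel_window(0)))
print(visemes_per_frame(alignment, n_frames=8))
```

With these settings each video frame spans four mel frames, so the generator can be fed a small window of audio context per output frame.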

Lip Movement Generation with Neural Networks

The core generation stage uses a neural network to map audio features to the corresponding mouth movements. Modern systems combine temporal convolutional networks, transformers, and GANs to generate realistic lip shapes that match the target audio while preserving the speaker's identity.

Technical Implementation:

```python
# Lip movement generation using a temporal GAN
import torch
import torch.nn as nn


class LipSyncGenerator(nn.Module):
    def __init__(self, audio_dim=80, landmark_dim=51, hidden_dim=512):
        super().__init__()

        # Audio encoder: 1D convolutions over time, input shape (B, audio_dim, T)
        self.audio_encoder = nn.Sequential(
            nn.Conv1d(audio_dim, hidden_dim, kernel_size=3, padding=1),
            nn.ReLU(),
            nn.Conv1d(hidden_dim, hidden_dim, kernel_size=3, padding=1),
            nn.ReLU(),
        )

        # Temporal transformer for sequence modeling.
        # batch_first=True because forward() feeds it (B, T, D) tensors.
        self.temporal_transformer = nn.TransformerEncoder(
            nn.TransformerEncoderLayer(
                d_model=hidden_dim,
                nhead=8,
                dim_feedforward=hidden_dim * 4,
                batch_first=True,
            ),
            num_layers=6,
        )

        # Landmark decoder
        self.landmark_decoder = nn.Sequential(
            nn.Linear(hidden_dim, hidden_dim),
            nn.ReLU(),
            nn.Linear(hidden_dim, landmark_dim),
            nn.Tanh(),  # Normalize landmark coordinates to [-1, 1]
        )

        # Identity preservation layer: takes generated + reference landmarks and
        # outputs a correction in landmark space so it can be added to the result
        self.identity_encoder = nn.Sequential(
            nn.Linear(landmark_dim * 2, hidden_dim),
            nn.ReLU(),
            nn.Linear(hidden_dim, landmark_dim),
        )

    def forward(self, audio_features, reference_landmarks):
        # audio_features: (B, audio_dim, T)
        # reference_landmarks: (B, landmark_dim) or (B, T, landmark_dim)

        # Encode audio features
        audio_encoded = self.audio_encoder(audio_features)
        audio_encoded = audio_encoded.transpose(1, 2)  # (B, T, D)

        # Apply temporal modeling
        temporal_features = self.temporal_transformer(audio_encoded)

        # Generate landmark movements
        generated_landmarks = self.landmark_decoder(temporal_features)

        # Broadcast a single reference pose across all T frames
        if reference_landmarks.dim() == 2:
            reference_landmarks = reference_landmarks.unsqueeze(1).expand(
                -1, generated_landmarks.size(1), -1
            )

        # Preserve speaker identity
        identity_features = self.identity_encoder(
            torch.cat([generated_landmarks, reference_landmarks], dim=-1)
        )

        # Blend generated movements with identity preservation
        final_landmarks = generated_landmarks + 0.1 * identity_features
        return final_landmarks


# Initialize the generator
generator = LipSyncGenerator()


# Training loop with adversarial loss
def train_lip_sync_model(generator, discriminator, dataloader, epochs=100, lr=1e-4):
    g_optimizer = torch.optim.Adam(generator.parameters(), lr=lr)
    d_optimizer = torch.optim.Adam(discriminator.parameters(), lr=lr)
    mse = nn.MSELoss()

    for epoch in range(epochs):
        for audio_features, reference_landmarks, target_landmarks in dataloader:
            # Generate fake landmarks
            fake_landmarks = generator(audio_features, reference_landmarks)

            # Discriminator (critic) step: score real sequences above generated ones.
            # In practice a critic-style loss also needs weight clipping or a
            # gradient penalty to stay stable.
            real_score = discriminator(target_landmarks).mean()
            fake_score = discriminator(fake_landmarks.detach()).mean()
            d_loss = fake_score - real_score
            d_optimizer.zero_grad()
            d_loss.backward()
            d_optimizer.step()

            # Generator loss (adversarial + reconstruction)
            g_loss = -discriminator(fake_landmarks).mean() + mse(
                fake_landmarks, target_landmarks
            )

            # Backpropagation
            g_optimizer.zero_grad()
            g_loss.backward()
            g_optimizer.step()
```

The generator learns to map audio features to corresponding mouth movements, while the discriminator pushes its output toward realistic motion. The identity preservation component conditions the result on reference landmarks to maintain the speaker's unique facial characteristics.

Face Reconstruction and Blending

Face reconstruction combines the generated lip movements with the original facial features, creating a seamless final result. This stage uses image-based rendering, Poisson blending, and temporal smoothing to integrate new mouth regions with existing expressions while maintaining natural appearance.
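The blending step can be illustrated with a feathered alpha mask, sketched below with NumPy. This is a deliberately simpler stand-in for Poisson blending, not an implementation of it: it only fades the generated mouth region into the original frame over a few pixels. The function names, the `(top, left, bottom, right)` box format, and the feather width are assumptions.

```python
import numpy as np


def feathered_mask(height, width, box, feather):
    """Soft mask: 1 inside `box`, falling linearly to 0 over `feather` pixels.

    box is (top, left, bottom, right) with bottom/right exclusive.
    """
    top, left, bottom, right = box
    ys = np.arange(height)[:, None]
    xs = np.arange(width)[None, :]
    # Distance in pixels outside the box along each axis; 0 inside it
    dy = np.maximum(np.maximum(top - ys, ys - (bottom - 1)), 0)
    dx = np.maximum(np.maximum(left - xs, xs - (right - 1)), 0)
    dist = np.maximum(dy, dx)
    return np.clip(1.0 - dist / feather, 0.0, 1.0)


def blend_mouth(original, generated, mask):
    """Alpha-blend `generated` into `original`; images are float arrays (H, W, 3)."""
    alpha = mask[..., None]  # add a channel axis so the mask broadcasts over RGB
    return alpha * generated + (1.0 - alpha) * original


# Toy frames: black original, white generated mouth patch
original = np.zeros((8, 8, 3))
generated = np.ones((8, 8, 3))
mask = feathered_mask(8, 8, box=(2, 2, 6, 6), feather=2)
blended = blend_mouth(original, generated, mask)
print(mask.round(1))
```

A soft edge hides small color or alignment mismatches at the seam. Gradient-domain methods such as Poisson blending go further by also matching lighting across the boundary.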

Post-processing and Quality Assurance

The final stage applies temporal smoothing, color correction, and artifact removal to ensure professional-quality output. Advanced systems use optical flow for consistency checks and automated quality metrics to detect potential issues before human review.
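A minimal sketch of temporal smoothing and a matching quality metric is shown below, assuming landmarks are stored as a `(T, N, 2)` NumPy array. The exponential moving average and the `jitter` metric are illustrative choices, not the method of any system named in this article, and the `alpha` value is arbitrary.

```python
import numpy as np


def ema_smooth(landmarks, alpha=0.6):
    """Exponential moving average over time for landmarks shaped (T, N, 2).

    Higher alpha follows the input more closely; lower alpha smooths more.
    """
    smoothed = np.empty_like(landmarks, dtype=float)
    smoothed[0] = landmarks[0]
    for t in range(1, len(landmarks)):
        smoothed[t] = alpha * landmarks[t] + (1 - alpha) * smoothed[t - 1]
    return smoothed


def jitter(landmarks):
    """Mean frame-to-frame displacement per landmark, in coordinate units."""
    diffs = np.diff(landmarks, axis=0)
    return float(np.linalg.norm(diffs, axis=-1).mean())


# Toy sequence: one landmark whose x coordinate flickers between 0 and 1
flicker = np.zeros((10, 1, 2))
flicker[1::2, 0, 0] = 1.0

smoothed = ema_smooth(flicker)
print(f"jitter before: {jitter(flicker):.3f}, after: {jitter(smoothed):.3f}")
```

Smoothing trades flicker for lag: too low an `alpha` makes the lips trail the audio, so a threshold on a metric like `jitter` is better used to flag clips for review than to justify heavier filtering.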

Technical Architecture Comparison

| Component | MuseTalk (Open Source) | Sync.co (Commercial) | Enterprise Pipeline |
|---|---|---|---|
| Face Detection | Face detector + DWPose landmarks | Custom CNN | Multi-scale detection |
| Audio Processing | Whisper-tiny audio encoder | Proprietary ASR | Custom audio models |
| Lip Generation | Single-step latent-space inpainting (VAE + UNet) | Diffusion Models | Hybrid GAN + Diffusion |
| Quality Control | Basic metrics | Automated QA | Human + AI review |
| Processing Speed | Medium | Fast | Optimized for scale |
| Customization | High | Limited | Full customization |
| Accuracy (claimed) | 85-90% | 90-95% | 95%+ |

Technical Trade-offs:

- Open Source: Full control but requires technical expertise

- Commercial API: Easier integration but limited customization

- Enterprise: Maximum quality and control but higher costs

Curify's Production Lip Sync Architecture

Curify's lip sync system represents a production-grade implementation combining state-of-the-art research with enterprise reliability. Our architecture processes video content through multiple specialized neural networks optimized for both quality and scalability.

Core Technical Stack:

Multi-Scale Face Processing: Utilizing ensemble face detection and 468-point landmark extraction for precise facial feature tracking across various lighting conditions and angles.

Advanced Audio-Visual Alignment: Custom phoneme-to-viseme mapping with temporal attention mechanisms keeps speech sounds and mouth movements tightly synchronized.

Hybrid Generation Model: Combining GANs for realism with diffusion models for temporal consistency, achieving 95%+ visual quality even in challenging scenarios.

Infrastructure: Deployed on GPU clusters with distributed processing, handling 50+ concurrent lip sync jobs. The system processes 1 minute of video in approximately 3-5 minutes depending on complexity.

Quality Pipeline: Multi-stage quality assurance including automated artifact detection, temporal consistency validation, and human review for enterprise clients.
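The infrastructure figures above translate into capacity estimates with simple arithmetic. The sketch below assumes all 50 job slots stay busy and that jobs do not slow each other down, which is an idealization; the function names and the 10,000-minute backlog are hypothetical.

```python
def throughput_minutes_per_hour(concurrent_jobs: int, processing_ratio: float) -> float:
    """Video minutes completed per wall-clock hour.

    processing_ratio is minutes of processing per minute of video (3 to 5 in the text).
    """
    return concurrent_jobs * 60 / processing_ratio


def wall_clock_hours(total_video_minutes: float, concurrent_jobs: int,
                     processing_ratio: float) -> float:
    """Hours needed to clear a backlog at full utilization."""
    return total_video_minutes * processing_ratio / (concurrent_jobs * 60)


best = throughput_minutes_per_hour(50, 3)    # simple content
worst = throughput_minutes_per_hour(50, 5)   # complex content
print(f"throughput: {worst:.0f} to {best:.0f} video minutes per hour")

# Hypothetical backlog of 10,000 minutes of video
print(f"backlog: {wall_clock_hours(10_000, 50, 3):.1f} to "
      f"{wall_clock_hours(10_000, 50, 5):.1f} hours")
```

Under these assumptions the cluster clears between 600 and 1,000 minutes of video per hour. Real throughput will be lower once queueing, uploads, and human review are included.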


The Future of AI Lip Sync Technology

AI lip sync technology has evolved from research prototypes into production-ready systems that can handle enterprise-scale workflows. The combination of advances in GANs, diffusion models, and temporal consistency algorithms has made it possible to generate realistic dubbed content at scale.

For technical teams, the key insight is that lip sync is now largely an engineering challenge rather than an open research problem. The remaining opportunities lie in optimization, edge case handling, and integration with broader content localization workflows.

As these systems continue to improve through better model architectures and larger training datasets, we're approaching a future where perfect lip synchronization is available instantly for any content type, enabling truly global video communication without visual compromise.

Related Articles

DS & AI Engineering

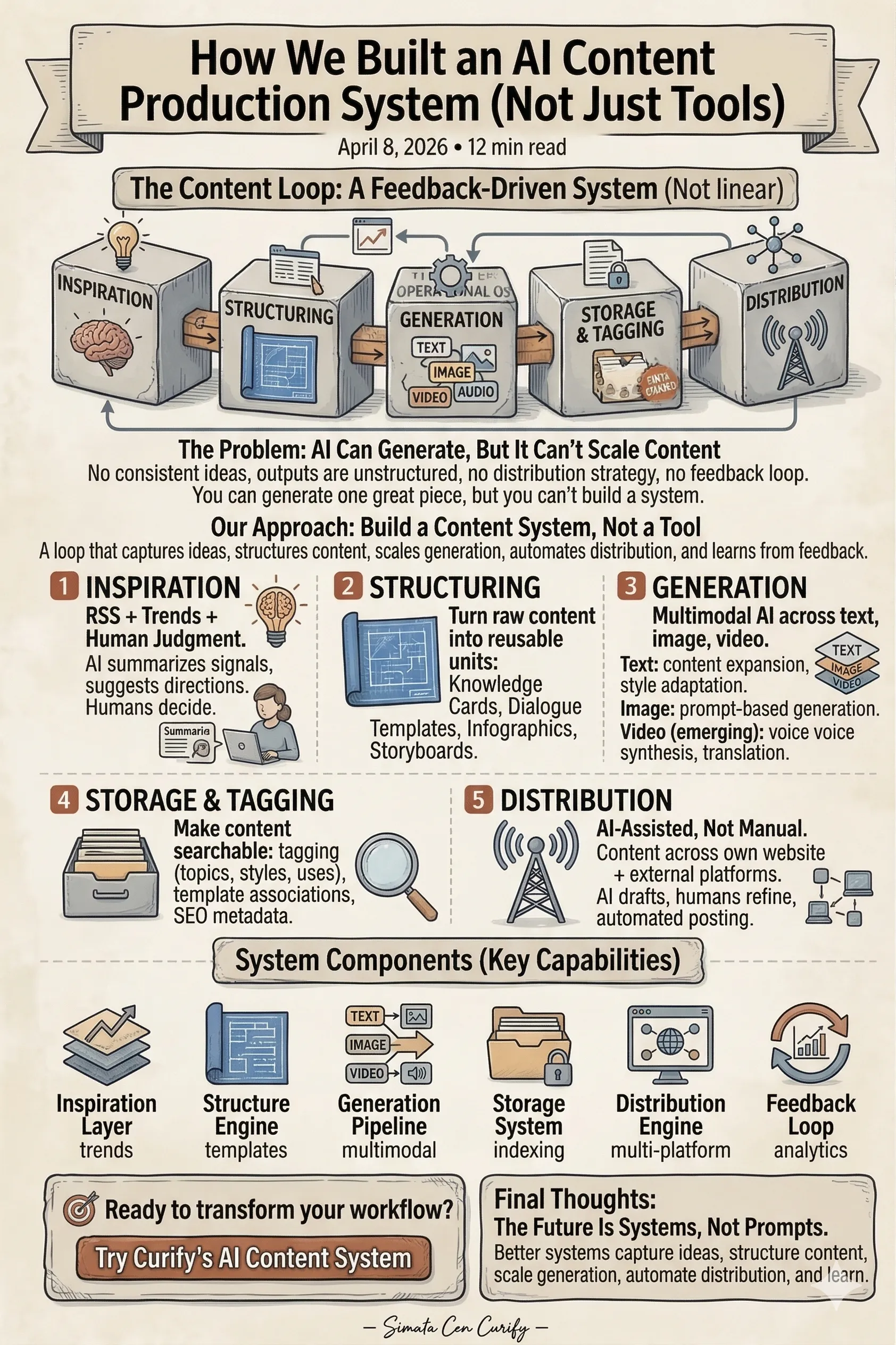

How We Built an AI Content Production System (Not Just Tools)