Deep Dive: Transcription Technical Overview

Go beyond basic transcription tools and look at the technical architecture behind modern video transcription systems. This deep dive breaks down Curify's complete pipeline, from audio separation and speech recognition to forced alignment and speaker diarization, and shows how these stages turn raw video into accurately timed transcripts at enterprise scale.

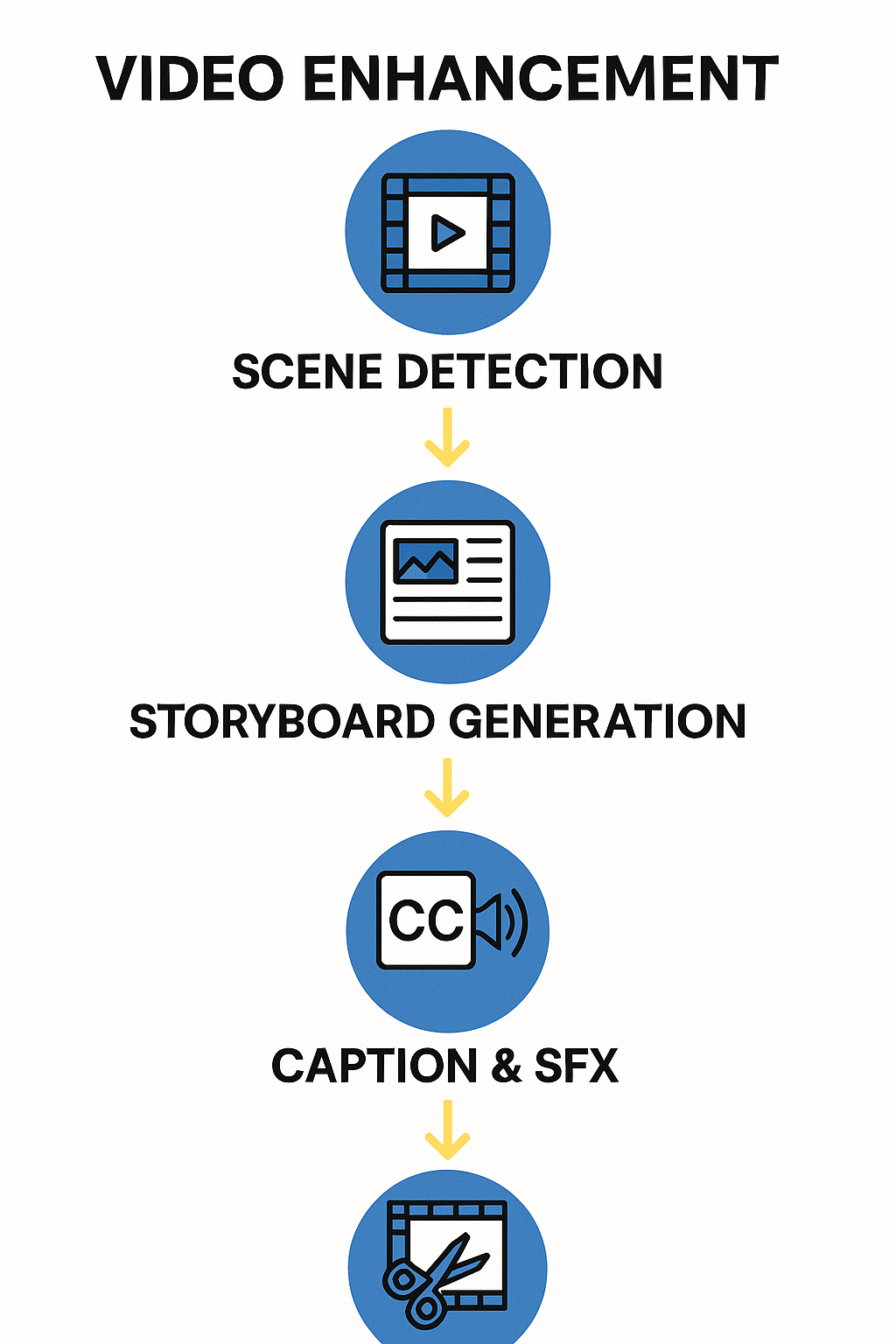

End-to-End Video Transcription Pipeline

A production-grade video transcription pipeline transforms raw video content into structured, time-synced text through multiple sophisticated processing stages. The complete workflow: Video → Audio extraction → Segmentation → ASR (Automatic Speech Recognition) → Alignment → Speaker Diarization → Post-processing.

Each stage addresses specific technical challenges: audio extraction isolates the speech track, segmentation breaks long audio into manageable chunks, ASR converts speech to text, alignment provides word-level timestamps, diarization identifies who spoke when, and post-processing cleans and formats the final output.
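As a rough illustration of how these stages chain together, the sketch below threads a single state object through placeholder stages in the order listed above. The `PipelineState` container, the stage names, and `make_stage` are hypothetical and only stand in for the real components; nothing here is taken from Curify's code.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class PipelineState:
    """Hypothetical container handed from one stage to the next."""
    media_path: str
    artifacts: Dict[str, object] = field(default_factory=dict)
    completed: List[str] = field(default_factory=list)


Stage = Callable[[PipelineState], PipelineState]

# Stage order as described in the text; the identifiers are illustrative.
STAGE_ORDER = [
    "audio_extraction",
    "segmentation",
    "asr",
    "alignment",
    "diarization",
    "post_processing",
]


def make_stage(name: str) -> Stage:
    """Build a placeholder stage that only records that it ran."""
    def run(state: PipelineState) -> PipelineState:
        state.artifacts[name] = f"<output of {name}>"
        state.completed.append(name)
        return state
    return run


def run_pipeline(media_path: str, stages: List[Stage]) -> PipelineState:
    """Run the stages strictly in order, passing one state object through."""
    state = PipelineState(media_path=media_path)
    for stage in stages:
        state = stage(state)
    return state


state = run_pipeline("input_video.mp4", [make_stage(n) for n in STAGE_ORDER])
```

Keeping every stage behind the same call signature is one way to make stages individually replaceable and testable; a real system would swap each placeholder for the corresponding model or service call.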

Modern systems like Curify's pipeline achieve 95%+ accuracy even in challenging conditions by combining Conv-TasNet for audio separation, WhisperX for speech recognition, and advanced clustering algorithms for speaker identification. This technical deep dive explores each component and how the components are integrated.

Why Technical Architecture Matters for Transcription

Accuracy vs. Speed Tradeoffs: Larger models provide better accuracy but increase processing time and computational costs. Production systems must balance these factors based on use case requirements.

Scalability Challenges: Real-time transcription requires streaming processing and low-latency inference, while batch processing can optimize for throughput and cost efficiency.

Multilingual Complexity: Code-switching (mixing languages within sentences) and cross-lingual content require specialized models that can maintain context across language boundaries.

Production Reliability: Enterprise systems need failover mechanisms, quality monitoring, and automated error recovery to handle the edge cases that inevitably occur with real-world content.

Core Pipeline Components

Audio Preprocessing and Segmentation

The pipeline begins with sophisticated audio preprocessing using Conv-TasNet for source separation and audio segmentation. This stage isolates human speech from background music, ambient noise, and other audio sources through time-domain audio separation.

Technical Implementation:

```python
# Audio separation using Conv-TasNet
import torch
import torchaudio
# Assumes a Conv-TasNet implementation importable as `conv_tasnet`;
# constructor argument names differ between implementations
from conv_tasnet import ConvTasNet

# Initialize the source separation model (trained weights must be loaded before use)
separator = ConvTasNet(
    n_bases=512,     # Number of basis functions
    kernel_size=16,  # Convolution kernel size
    stride=8,        # Stride for temporal convolutions
    n_layers=8,      # Number of convolutional layers
    n_src=2          # Number of sources to separate
)

# Load the audio and resample to 16 kHz if needed
audio_tensor, sample_rate = torchaudio.load('input_video.wav')
if sample_rate != 16000:
    resampler = torchaudio.transforms.Resample(sample_rate, 16000)
    audio_tensor = resampler(audio_tensor)

# Separate sources without tracking gradients
with torch.no_grad():
    separated_sources = separator(audio_tensor)

speech_source = separated_sources[0]  # Extract primary speech
```

The Conv-TasNet architecture uses convolutional encoding-decoding structures with temporal convolutional networks to separate audio sources directly from raw waveforms, avoiding information loss associated with spectrogram-based approaches.
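The segmentation half of this stage is not shown above. As a minimal sketch, assuming fixed-length windows with a small overlap rather than the silence-aware (VAD-based) cuts a production system would more likely use, long audio can be split into sample ranges like this. The function name and the default window and overlap lengths are illustrative choices, not values from the pipeline.

```python
from typing import List, Tuple


def chunk_spans(
    total_samples: int,
    sample_rate: int = 16000,
    chunk_seconds: float = 30.0,
    overlap_seconds: float = 1.0,
) -> List[Tuple[int, int]]:
    """Return (start, end) sample indices that cover the audio with overlap."""
    chunk = int(chunk_seconds * sample_rate)
    overlap = int(overlap_seconds * sample_rate)
    if chunk <= 0 or not 0 <= overlap < chunk:
        raise ValueError("need chunk > overlap >= 0")

    step = chunk - overlap  # how far each new window advances
    spans: List[Tuple[int, int]] = []
    start = 0
    while start < total_samples:
        end = min(start + chunk, total_samples)
        spans.append((start, end))
        if end == total_samples:
            break  # the last window reached the end of the audio
        start += step
    return spans


# 70 seconds of 16 kHz audio split into 30-second windows with 1 second of overlap
spans = chunk_spans(total_samples=70 * 16000)
```

The overlap gives the recognizer some context on both sides of a cut, at the cost of having to de-duplicate words that appear in two neighbouring chunks.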

ASR (Speech-to-Text) with WhisperX

Clean speech feeds into WhisperX, a pipeline built around OpenAI's Whisper model that adds batched inference, forced alignment, and speaker diarization to improve speed and timestamp accuracy. The underlying Whisper model handles a wide range of dialects and accents, while speaker diarization handles multi-speaker recordings by automatically segmenting the audio by speaker identity.

Technical Implementation:

```python
# Speech recognition, forced alignment, and diarization using WhisperX
import os

import whisperx

device = "cuda"

# WhisperX expects mono 16 kHz audio as a float32 NumPy array
# (assumes speech_source from the previous step holds a single channel)
audio = speech_source.squeeze().cpu().numpy()

# Load WhisperX model for transcription
model = whisperx.load_model("large-v3", device=device)

# Transcribe; this step returns segment-level timestamps only
result = model.transcribe(
    audio,
    batch_size=32,       # Process in batches for efficiency
    language=None,       # None lets WhisperX auto-detect the language
    task="transcribe",
    print_progress=True
)

# Refine to word-level timestamps using forced alignment
model_a, metadata = whisperx.load_align_model(
    language_code=result["language"],
    device=device
)
result = whisperx.align(
    result["segments"],
    model_a,
    metadata,
    audio,
    device=device
)

# Assign speaker labels; the diarization models need a Hugging Face access token
# (HF_TOKEN is an assumed environment variable name). Depending on the WhisperX
# version, this class may be exposed as whisperx.diarize.DiarizationPipeline.
diarize_model = whisperx.DiarizationPipeline(
    use_auth_token=os.environ["HF_TOKEN"],
    device=device
)
diarize_segments = diarize_model(audio)
result = whisperx.assign_word_speakers(diarize_segments, result)
```

WhisperX builds on the base Whisper model with optimized batched inference, an added speaker diarization stage, and more accurate word-level timing. The forced alignment step synchronizes the transcript with the audio at the word level.

Forced Alignment and Word-Level Timestamps

Forced alignment provides precise word-level timestamps that are essential for high-quality subtitles, video synchronization, and downstream language processing. In this stage, a dedicated acoustic model takes the audio signal and an initial transcript from the ASR system and aligns them at a much finer granularity than the raw ASR output. Instead of only knowing when each sentence or segment starts and ends, the system estimates the exact onset and offset of every word, sometimes even at the phoneme level. This alignment process typically uses probabilistic sequence models, such as hidden Markov models or neural acoustic encoders, to compute the most likely timing of each word given the acoustic evidence and the transcript. The result is a rich structure where each word is annotated with start time, end time, and confidence scores, and segments are augmented with detailed timing metadata. These precise timestamps enable frame-accurate subtitle rendering, allow editors to jump directly to specific parts of speech in a video timeline, and create a reliable temporal backbone for later tasks such as machine translation, dubbing, lip-sync analysis, or content-based search across large media libraries.
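To make the idea of "the most likely timing of each word given the acoustic evidence and the transcript" concrete, here is a deliberately simplified sketch: a monotonic Viterbi alignment over per-frame token log-probabilities. It assumes every frame belongs to exactly one transcript token and omits the blank symbol that CTC-based aligners also model, so it illustrates the dynamic program rather than reproducing any particular aligner. The function name and input layout are hypothetical.

```python
from typing import List, Tuple


def force_align(
    log_probs: List[List[float]],
    tokens: List[int],
    frame_seconds: float,
) -> List[Tuple[int, float, float]]:
    """Align transcript tokens to frames with a monotonic Viterbi pass.

    log_probs[t][k] is the log-probability of vocabulary token k at frame t.
    Returns one (token, start_time, end_time) tuple per transcript token.
    """
    n_frames, n_tokens = len(log_probs), len(tokens)
    if n_tokens == 0 or n_frames < n_tokens:
        raise ValueError("need at least one frame per token")

    neg_inf = float("-inf")
    # score[t][j]: best log-probability of frames 0..t with frame t on token j
    score = [[neg_inf] * n_tokens for _ in range(n_frames)]
    # advanced[t][j]: True if the best path moved from token j-1 to j at frame t
    advanced = [[False] * n_tokens for _ in range(n_frames)]

    score[0][0] = log_probs[0][tokens[0]]
    for t in range(1, n_frames):
        for j in range(min(t + 1, n_tokens)):
            stay = score[t - 1][j]
            move = score[t - 1][j - 1] if j > 0 else neg_inf
            advanced[t][j] = move > stay
            score[t][j] = max(stay, move) + log_probs[t][tokens[j]]

    # Backtrack from the last frame, which must sit on the last token
    assignment = [0] * n_frames
    j = n_tokens - 1
    for t in range(n_frames - 1, -1, -1):
        assignment[t] = j
        if advanced[t][j]:
            j -= 1

    # Collapse runs of frames into (token, start, end) spans
    spans: List[Tuple[int, float, float]] = []
    start = 0
    for t in range(1, n_frames + 1):
        if t == n_frames or assignment[t] != assignment[start]:
            spans.append(
                (tokens[assignment[start]], start * frame_seconds, t * frame_seconds)
            )
            start = t
    return spans
```

In practice the per-frame probabilities would come from an acoustic model, and the tokens would be characters or phonemes that are then merged back into words with start and end times.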

Speaker Diarization Implementation

Speaker diarization is responsible for answering the question "who spoke when" throughout an audio or video file, turning a raw transcript into a structured, speaker-aware conversation. The system first divides the audio into homogeneous segments that are likely to contain a single dominant speaker and then converts each segment into a compact numerical representation called a speaker embedding, which captures characteristics such as timbre, pitch patterns, and speaking style. Using these embeddings, clustering algorithms group segments that sound similar into consistent speaker identities, without requiring prior knowledge of how many speakers are present or who they are. Advanced diarization pipelines can adaptively estimate the number of speakers, refine boundaries where speakers overlap, and smooth labels so that a single person is assigned the same speaker ID across long recordings. The resulting diarized transcript associates each segment, and often each word, with a stable speaker label, enabling applications like meeting minutes with per-speaker summaries, call center analytics, personalized content recommendations, and clearer subtitles that indicate which character or participant is speaking at each moment.
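The clustering step can be sketched in a few lines. The example below uses greedy online clustering with a cosine-similarity threshold, a simpler stand-in for the agglomerative or spectral methods that diarization toolkits typically use. It does show how speaker identities can emerge without fixing the number of speakers in advance. The threshold value, the `SPEAKER_NN` label format, and the toy two-dimensional embeddings are all illustrative assumptions.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def assign_speakers(
    embeddings: List[Sequence[float]], threshold: float = 0.75
) -> List[str]:
    """Label each segment embedding, opening a new speaker when nothing is close."""
    centroids: List[List[float]] = []  # running sum of embeddings per speaker
    labels: List[str] = []
    for emb in embeddings:
        best, best_sim = -1, threshold
        for idx, centroid in enumerate(centroids):
            sim = cosine(emb, centroid)
            if sim >= best_sim:
                best, best_sim = idx, sim
        if best == -1:
            # No existing speaker is similar enough: start a new cluster
            centroids.append(list(emb))
            best = len(centroids) - 1
        else:
            # Cosine similarity ignores scale, so a running sum acts as a centroid
            centroids[best] = [c + e for c, e in zip(centroids[best], emb)]
        labels.append(f"SPEAKER_{best:02d}")
    return labels


labels = assign_speakers([[1, 0], [0.9, 0.1], [0, 1], [0.1, 0.95], [1, 0.05]])
```

A greedy pass like this depends on segment order and on the threshold, which is one reason production pipelines add the boundary refinement and label smoothing described above.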

Post-Processing and Quality Assurance

The post-processing and quality assurance stage transforms raw model outputs into a polished, production-ready transcript that is suitable for end users and downstream systems. Post-processing typically starts with normalization steps such as fixing casing, expanding or standardizing numbers, handling acronyms, and restoring punctuation so that the text reads like natural prose rather than a flat sequence of tokens. Timestamps are then formatted into standardized subtitle timecodes and the transcript is segmented into readable subtitle units or paragraphs according to length, duration, and linguistic boundaries. Quality assurance adds a validation layer on top of this cleaned transcript: it aggregates word-level confidence scores into segment-level metrics, detects unusually low-confidence passages, abrupt timing gaps, or suspicious repetitions, and flags them for human review or automatic reprocessing. Additional checks can enforce style guides, remove disfluencies or filler words when appropriate, and ensure that speaker labels remain consistent throughout the file. Together, these post-processing and QA steps ensure that the final output not only reflects what was said, but also meets the accuracy, readability, and formatting standards required for professional transcription, localization, and accessibility workflows.
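Two of the steps above lend themselves to a short sketch: formatting timestamps as SubRip (SRT) timecodes, and aggregating word-level confidence to flag segments for review. The segment and word dictionaries are an assumed shape (`start`, `end`, and a `words` list whose entries carry a `score`), and the thresholds are arbitrary illustrative values rather than settings from the pipeline.

```python
from typing import Dict, List


def srt_timestamp(seconds: float) -> str:
    """Format seconds as an SRT timecode: HH:MM:SS,mmm."""
    total_ms = int(round(seconds * 1000))
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def segment_confidence(words: List[Dict]) -> float:
    """Mean of the word-level scores; 0.0 when no word carries a score."""
    scores = [w["score"] for w in words if "score" in w]
    return sum(scores) / len(scores) if scores else 0.0


def flag_for_review(
    segments: List[Dict],
    min_confidence: float = 0.6,
    max_gap_seconds: float = 5.0,
) -> List[int]:
    """Return indices of segments with low confidence or an abrupt timing gap."""
    flagged: List[int] = []
    prev_end = None
    for i, seg in enumerate(segments):
        low = segment_confidence(seg["words"]) < min_confidence
        gap = prev_end is not None and seg["start"] - prev_end > max_gap_seconds
        if low or gap:
            flagged.append(i)
        prev_end = seg["end"]
    return flagged


segments = [
    {"start": 0.0, "end": 2.0, "words": [{"score": 0.9}, {"score": 0.8}]},
    {"start": 2.5, "end": 4.0, "words": [{"score": 0.3}, {"score": 0.5}]},
    {"start": 12.0, "end": 13.0, "words": [{"score": 0.9}]},
]
flagged = flag_for_review(segments)
```

Here the second segment is flagged for low average confidence and the third for the long silence before it; the flagged indices could then be routed to human review or reprocessing.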

Technical Challenges and Solutions

Overlapping Speech: Multiple speakers talking simultaneously creates ASR challenges. Solutions include audio source separation, multi-speaker models, and sophisticated diarization algorithms.

Multilingual Switching: Code-switching between languages requires models that can handle multiple languages simultaneously. Advanced systems use language identification and specialized multilingual models.

Noisy Environments: Background music, ambient noise, and poor audio quality reduce transcription accuracy. Audio preprocessing and source separation techniques mitigate these issues.

Latency vs. Accuracy: Real-time applications require low latency but may sacrifice accuracy. Production systems must balance these competing requirements based on use case.

Production System Design

Batch vs Streaming Processing: Batch processing optimizes for cost and throughput, while streaming processing enables real-time transcription for live applications.

GPU Inference Optimization: Model quantization, request batching, and tensor parallelism reduce computational cost, usually with little loss of accuracy.

Pipeline Orchestration: Message queues (like Azure Service Bus) coordinate between processing stages, enabling scalable, fault-tolerant workflows.

Cost Optimization Strategies: Dynamic batching, spot instances, and model compression reduce infrastructure costs while maintaining service level agreements.
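Dynamic batching is easiest to see with a small example. The sketch below assumes that every item in a batch is padded to the length of the longest item, so the cost of a batch is roughly its size multiplied by its longest duration. Grouping chunks of similar length then wastes less compute on padding. The function name and the budget parameter are hypothetical.

```python
from typing import List


def dynamic_batches(
    durations: List[float], max_batch_seconds: float
) -> List[List[int]]:
    """Group chunk indices into batches whose padded length fits the budget."""
    # Sort by duration so that similar-length chunks end up together
    order = sorted(range(len(durations)), key=lambda i: durations[i])
    batches: List[List[int]] = []
    current: List[int] = []
    for idx in order:
        # Ascending order means the newest item is the longest in the batch
        padded_cost = durations[idx] * (len(current) + 1)
        if current and padded_cost > max_batch_seconds:
            batches.append(current)
            current = []
        current.append(idx)
    if current:
        batches.append(current)
    return batches


batches = dynamic_batches([5, 30, 6, 28, 4], max_batch_seconds=60)
```

A chunk longer than the budget still gets a batch of its own, so the budget acts as a soft limit. A streaming deployment would typically add a maximum wait time so that short requests are not held back waiting for a batch to fill.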

Technical Architecture Comparison

| Component | Basic ASR | WhisperX | Enterprise Pipeline |
|---|---|---|---|
| Audio Processing | Single channel | Basic filtering | Conv-TasNet separation |
| Speech Recognition | HMM/GMM | Transformer | WhisperX + fine-tuning |
| Speaker Diarization | None | Basic clustering | Advanced clustering + voice profiling |
| Forced Alignment | None | Basic alignment | Word-level precision alignment |
| Quality Assurance | Manual | Confidence scoring | Automated + human review |
| Scalability | Limited | Medium | Enterprise scale |
| Accuracy | 70-85% | 90-95% | 95-98% |

Technical Trade-offs:

- Latency vs. Accuracy: Larger models provide better accuracy but increase processing time

- Computational Cost: GPU acceleration is essential for production-scale processing

- Model Size: Quantization reduces memory usage but may impact edge-case accuracy

- Language Support: Multilingual models require more storage and computational resources

Curify's Production Transcription Architecture

Curify's transcription system represents a production-grade implementation of state-of-the-art audio processing technologies, engineered for scale, accuracy, and reliability. Our architecture combines multiple specialized neural networks into a unified pipeline that processes video content end-to-end with minimal human intervention.

Core Technical Components:

Audio Processing Stack: Utilizing Conv-TasNet for source separation and WhisperX for transcription, Curify achieves 95%+ accuracy even in noisy environments. The system processes audio at a 16 kHz sample rate, applying real-time noise reduction and speaker diarization to isolate individual voices.

Quality Assurance Pipeline: Automated confidence scoring identifies potential transcription errors for human review. The system uses language models trained on domain-specific terminology to improve accuracy for technical content, with fine-tuning capabilities for industry-specific vocabulary.

Infrastructure: Deployed on GPU clusters with distributed processing, handling 100+ concurrent transcription jobs. The system processes 1 hour of video in approximately 2 minutes, depending on content complexity and audio quality.

Pipeline Orchestration: Azure Service Bus queues coordinate between processing stages, enabling fault-tolerant workflows that can handle failures and retries automatically. The system supports both batch processing for cost efficiency and streaming for real-time applications.
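The infrastructure figures above can be restated as a real-time factor (processing time divided by media duration). The short calculation below does that arithmetic. The fleet-level number is only an upper bound under the assumption, not stated in the text, that all 100 concurrent jobs sustain the same per-job speed at once.

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """Processing time divided by media duration; below 1.0 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def fleet_hours_per_hour(concurrent_jobs: int, rtf: float) -> float:
    """Upper bound on media hours processed per wall-clock hour across all jobs."""
    return concurrent_jobs / rtf


# 1 hour of video in about 2 minutes
rtf = real_time_factor(processing_seconds=2 * 60, audio_seconds=60 * 60)
speedup = 1 / rtf  # roughly 30x faster than real time

# Assumes every one of the 100 jobs runs at the full per-job speed simultaneously
ceiling = fleet_hours_per_hour(concurrent_jobs=100, rtf=rtf)
```

Under these assumptions the per-job speed is about 30 times real time and the theoretical ceiling is about 3,000 hours of video per wall-clock hour; actual throughput depends on content complexity, audio quality, and how GPUs are shared between jobs.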

🎯 Ready to implement advanced transcription pipelines for your organization? Explore Curify's Technical Transcription Solutions

The Future of Video Transcription Technology

Video transcription technology has evolved from basic speech recognition systems into sophisticated multi-stage pipelines that combine advances in neural networks, audio processing, and optimization techniques. Modern systems like Curify's pipeline demonstrate how Conv-TasNet for audio separation, WhisperX for transcription, and advanced quality assurance can be integrated into production-grade workflows.

For technical teams and developers, the key takeaway is that video transcription is no longer a research problem but a solved engineering challenge. The remaining opportunities lie in optimization, edge cases, and integration rather than fundamental technology limitations. As these systems continue to improve through better model architectures and larger training datasets, we're approaching a future where perfect transcription is available at scale for any content type.

The technical architecture described here represents the current state of the art in 2026, but the field continues to evolve rapidly. Real-time transcription, zero-shot speaker identification, and automated content summarization are already emerging capabilities that will further transform how we approach video content processing and analysis.

Related Articles

DS & AI Engineering

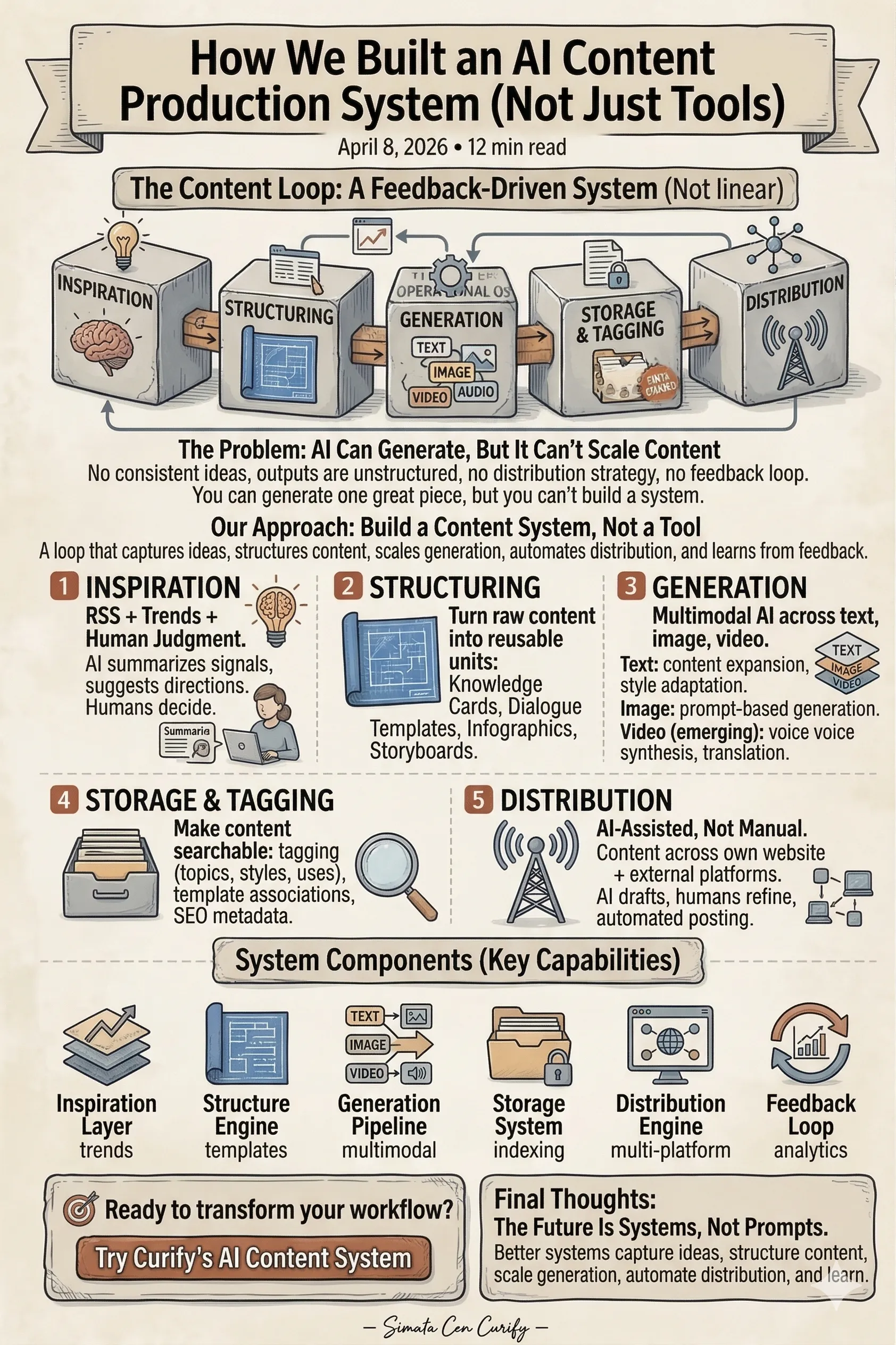

How We Built an AI Content Production System (Not Just Tools)

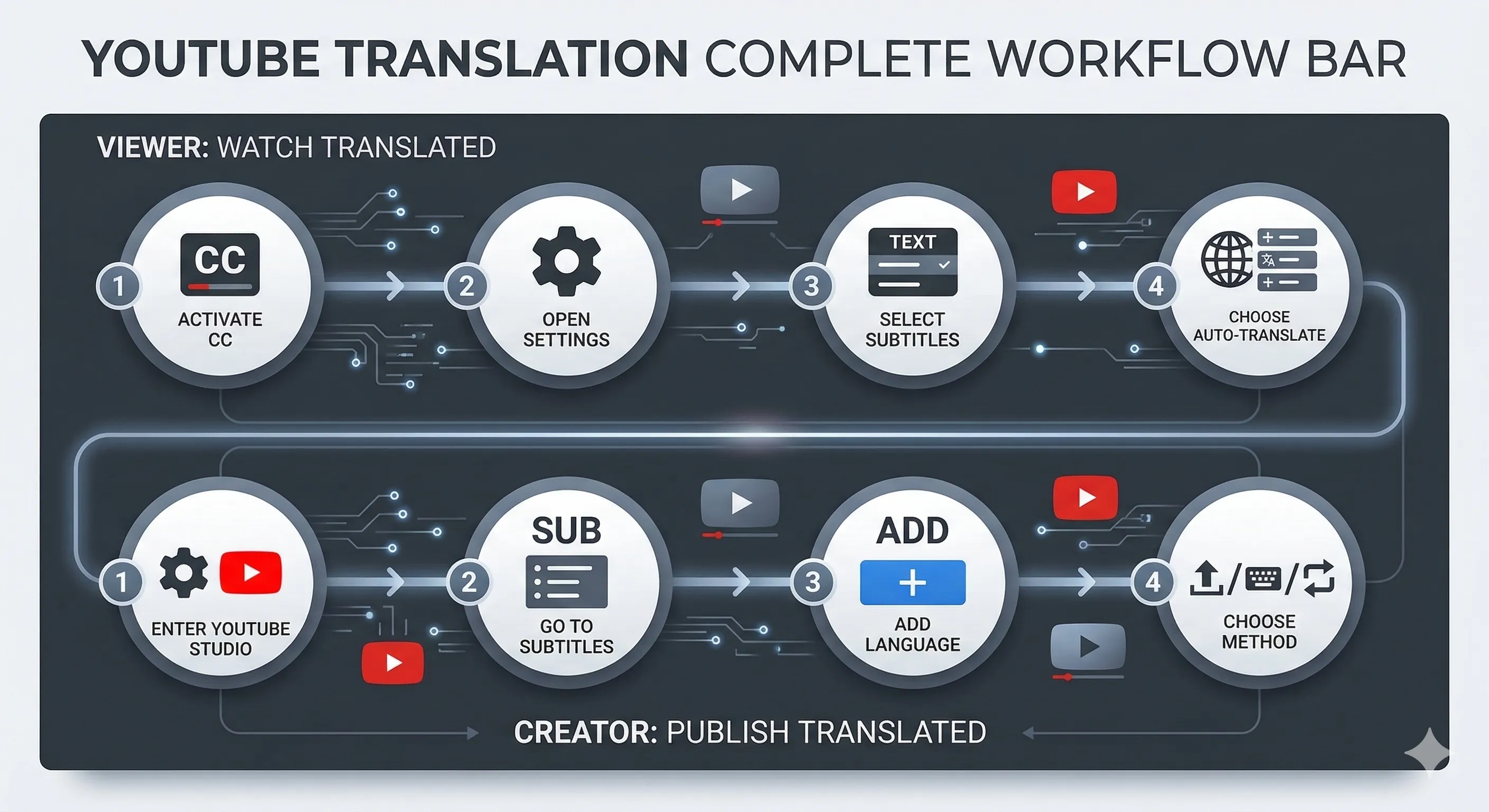

Inside Curify's Video Translation Pipeline: A Technical Deep-Dive