F5-TTS AI Voice Review: Does It Actually Beat ElevenLabs?

Explore the leading AI voice cloning tools of 2026, from open‑source frameworks like F5‑TTS to commercial platforms such as ElevenLabs and Curify. Compare accuracy, realism, cost, and compliance to identify the best fit for your dubbing, media localization, or enterprise voice pipeline.

F5-TTS voice cloning for multilingual dubbing at scale: the hybrid pro workflow, benchmarks, and compliance

Short-form and education teams are localizing more content than ever—without the luxury of linear headcount growth. If you're running weekly drops on YouTube, TikTok, or a course platform, you need cloned voices that sound consistent across languages, predictable costs, and a distribution strategy you can actually operate. This guide shows how to use F5-TTS voice cloning inside a hybrid production stack: F5-TTS for cloning/customization; commercial TTS for distribution at scale. You'll get a reproducible benchmarking playbook (WER, MOS-like, latency/RTF, cost/min), a blueprint for audio A/B listening galleries, and a compliance toolkit you can hand to legal.

How cross-lingual F5-TTS works (and where it struggles)

F5-TTS is a non‑autoregressive, flow‑matching text-to-speech model that couples a Diffusion Transformer (DiT) with conditioning from a short voice reference. The result: fast synthesis and convincing zero‑shot cloning, including cross‑lingual transfer when the reference voice is in one language and the target script is in another. For architecture and training details, see the maintainers' repository in the official SWivid/F5‑TTS GitHub and the ICLR‑submitted paper on OpenReview. The repo documents examples, community finetunes, and evaluation scripts, while the paper explains why flow‑matching supports stable, low‑latency generation.

- According to the maintainers' documentation in the official SWivid/F5‑TTS GitHub repository (accessed Mar 2026), you'll find working inference code, multilingual examples, and pointers to community models.

- The model's design and empirical behavior are detailed in the OpenReview F5‑TTS paper (2025), which emphasizes speed, zero‑shot cloning, and multilingual viability.

- Expressive extremes: laughter, shouting, and whispering can lose nuance.

- Edge phonemes: rare phonemes and mixed‑script code‑switching sometimes soften or misplace stress.

- Prosody drift in long clips: without chunking, rhythm may wander on monologues >30–45 seconds.

The hybrid TTS stack for production

Think of your stack in two halves. Left side: creative control and customization (clone, adapt, iterate) using F5‑TTS voice cloning, with your prompts, references, and model settings. Right side: distribution at scale, where a commercial TTS platform provides SLAs, quotas, and failover. You can swap which half synthesizes the final audio per title, per locale, or even per scene, guided by a decision matrix.

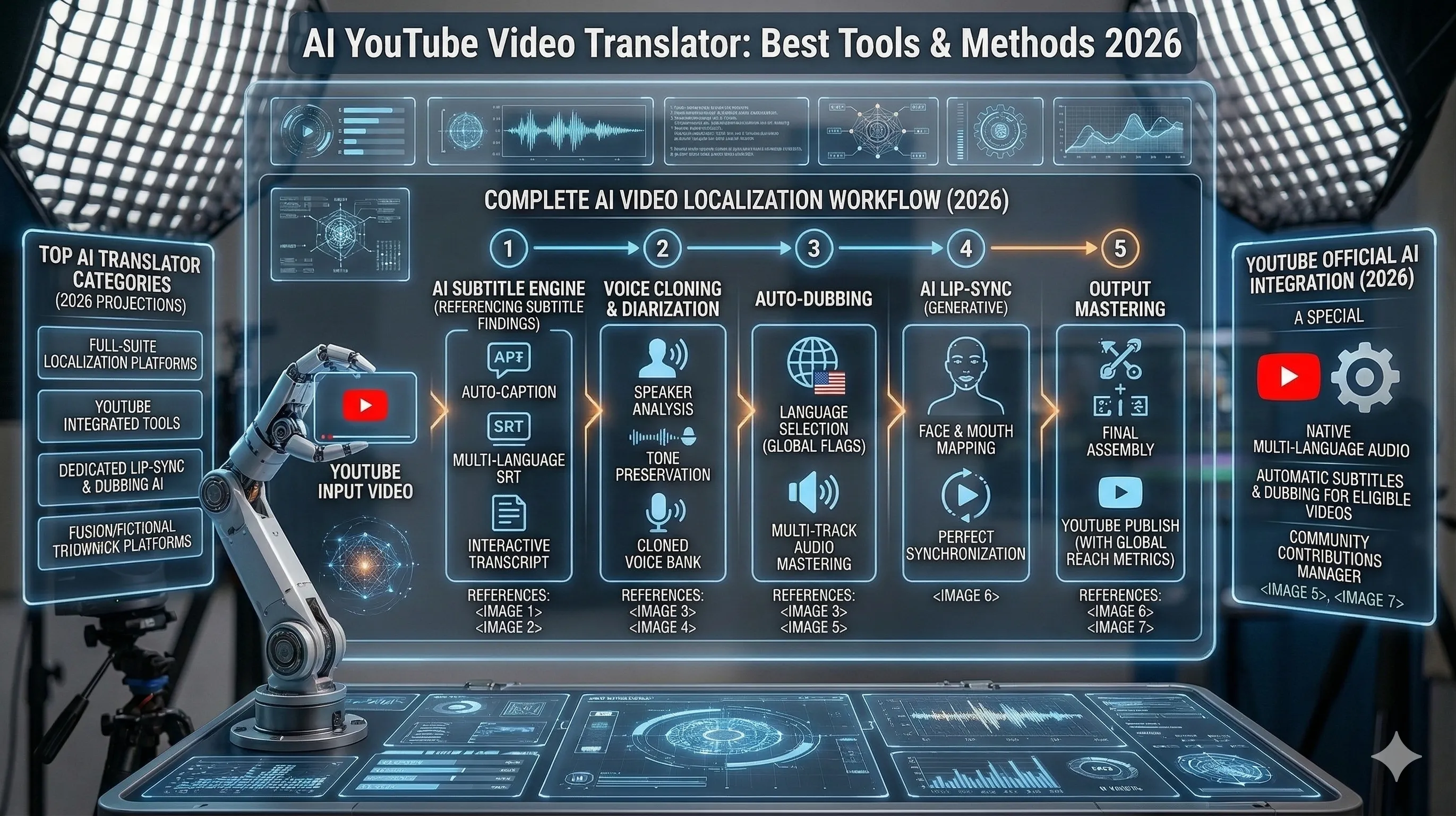

Stages (high level): capture reference → script prep (glossary, timings) → F5‑TTS cloning/customization → QC → subtitles & lip‑sync alignment → distribution to platforms → analytics and iteration.

Decision matrix (use this to choose engine per locale/title):

Use both: prototype and perfect the voice with F5‑TTS, then either (a) ship those exact renders, or (b) match style and distribute via commercial TTS when you need ironclad uptime and quotas.

Reproducible benchmarking: WER, MOS‑like, latency/RTF, and cost per minute

You don't have to trust marketing. Measure it. Here's a repeatable protocol you can drop into your CI.

1. Intelligibility via WER

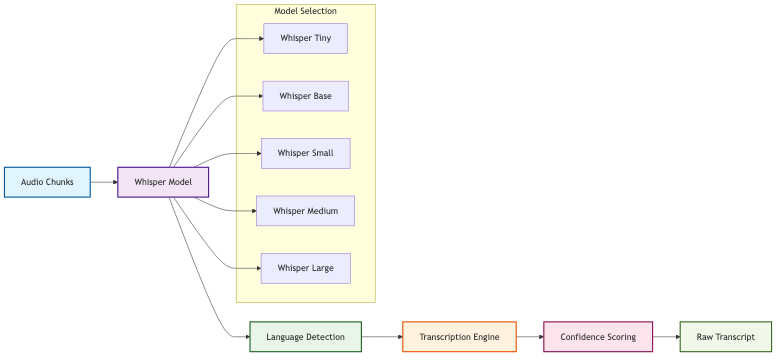

- Transcribe model outputs with Whisper large‑v3 at deterministic settings (temperature=0; beam search) and compute WER/CER against normalized references. For background on the evaluation pattern, see the seed‑tts‑eval methodology by ByteDance (2025) and community discussions on Whisper large‑v3 settings.

- Score each utterance at 16 kHz using the official UTMOS repository (VoiceMOS 2022); report system-level mean with a 95% CI. Note in your report that objective MOS correlates better system‑level than per-file.

- Define RTF = synthesis_time / audio_duration. Log cold-start separately; then report steady-state averages across ≥200 runs. Record GPU (e.g., L20/A100), precision (FP16/BF16), steps (NFE), concurrency, and streaming vs batch.

- Self-hosted: derive $/min from GPU $/hour and measured RTF at target concurrency. Vendor APIs: use official pricing pages and convert per-character fees into $/min with a chars/word assumption.

- Amazon lists per-million-character rates on the AWS Polly pricing page (2026).

- ElevenLabs publishes API rates on the ElevenLabs API pricing page (2026).

- For additional context, consult the Google Cloud Text‑to‑Speech pricing index and capture exact figures at measurement time.

Build your audio A/B gallery the right way

A credible listening gallery helps stakeholders hear trade‑offs at a glance.

- Reference capture: record 10–20 seconds of clean speech from your voice owner per locale target; 48 kHz WAV; room‑tone padded. Log consent artifacts alongside the files.

- Triplets per script: for each test script in each locale, render three files—Reference (human), F5-TTS zero‑shot, and Commercial TTS. Match loudness (−16 LUFS for platforms) before publishing.

- Hosting and naming: store lossless masters and publish 192 kbps AAC previews. Use a consistent scheme like en_es_lesson1_ref.wav, en_es_lesson1_f5.wav, en_es_lesson1_com.wav.

- Listening notes: keep comments specific—plosives (p, b), sibilants (s, sh), breath/noise floor, and prosody alignment. Flag timing mismatches that will affect lip‑sync.

Run F5-TTS Yourself: Install, License, Quickstart

F5-TTS is open source — if you want to run it locally instead of paying per generation, the GitHub repo (SWivid/F5-TTS) has install, examples, and inference scripts.

License: MIT, which permits commercial use without per-call licensing fees. Check the current repo state before production deploys — license terms occasionally evolve between major versions.

Install path: clone the repo, install dependencies (PyTorch plus a few audio libraries), and the CLI entry points cover both standard inference and voice cloning. A CUDA-capable GPU is strongly recommended — inference on CPU is roughly an order of magnitude slower, fine for prototyping, painful at production scale.

Voice cloning quickstart: zero-shot cloning needs only a 5-15 second reference audio clip in the source language. Pass the reference WAV plus the target text to the inference CLI; the model produces a 24kHz WAV in the cloned voice. First-pass quality is production-acceptable for narration and explainer content. For emotional or character delivery, iterate on reference-clip selection or fall back to a hosted API with broader emotional range.

Self-host vs hosted API — when to pick which:

- *Self-host F5-TTS*: high-volume production where per-generation cost matters, strict data-residency requirements, or custom fine-tuning needs.

- *Hosted API (ElevenLabs, Curify, others)*: low or sporadic volume, no GPU infrastructure, or you need emotional-range options that exceed the open-source baseline.

For the architecture details — the non-autoregressive flow-matching plus diffusion transformer backbone — the original F5-TTS paper linked from the GitHub repo is the canonical reference.

Conclusion

Here's the deal: treat F5‑TTS as your creative lab for precise voice identity and cross‑lingual control, then lean on a commercial TTS when distribution SLAs, quotas, and burst capacity matter most. Measure everything—WER, MOS‑like, RTF, and dollars per minute—so you can defend trade‑offs title by title and locale by locale. Do that, and multilingual dubbing at scale stops feeling like a gamble and starts running like an operation.

Take the next step

Putting what you read into practice.