Emotion TTS Movie: Make Your Narratives Sound More Emotional

Transform Flat Narratives into Emotional Masterpieces

What if your video narration could convey not just information, but genuine emotion? Our emotion-enhanced TTS tool takes existing video content and re-voices it with high-energy, emotionally expressive speech synthesis. Using SSML markup with Azure Cognitive Services text-to-speech and ElevenLabs transcription, it turns flat, monotonous narration into expressive delivery that holds an audience's attention.

What This Emotion Enhancement Tool Does

This Python tool extracts the audio from an existing video, transcribes it with word-level timestamps, then re-synthesizes each segment with an expressive speaking style. The result is a new audio track, designed to stay in sync with the original video, that adds dramatic expression, energy, and emotional nuance that plain, unstyled TTS output lacks.

🎭 Core Capabilities

How the Emotion Pipeline Works

The tool follows a six-step process that turns flat narration into expressive delivery while keeping the new audio aligned with the original video.

📥 Audio Extraction

Extract high-quality audio from existing MP4 video using MoviePy, preserving original timing and quality.

Audio Extraction Process

Uses MoviePy to extract PCM audio with proper codec settings for maximum compatibility.

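    # MoviePy 1.x import path; MoviePy 2.x uses `from moviepy import VideoFileClip`
    from moviepy.editor import VideoFileClip

    clip = VideoFileClip(video_path)
    clip.audio.write_audiofile(audio_path, codec='pcm_s16le', logger=None)

📝 Intelligent Transcription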

ElevenLabs Scribe provides word-level timestamps and punctuation detection for precise segmentation.

Transcription API

Direct API integration with word-level timing and automatic punctuation detection.

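    # Upload the extracted WAV; audio_path is the file written in the extraction step
    with open(audio_path, 'rb') as f:
        resp = requests.post(
            ELEVENLABS_URL,
            headers={'xi-api-key': ELEVENLABS_KEY},
            files={'file': ('audio.wav', f, 'audio/wav')},
            data={'model_id': 'scribe_v1'},
        )

🎭 Emotional SSML Building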

Convert text segments into SSML with expressive markup for high-energy delivery styles.

SSML Generation

Builds SSML with advertisement_upbeat style, rate/pitch/volume controls for emotional expression.

    from xml.sax.saxutils import escape

    def build_emotional_ssml(text: str, voice: str = 'en-US-AndrewNeural') -> str:
        # Escape &, < and > so transcript text cannot break the XML
        escaped = escape(text)
        return f'''<speak version='1.0' xmlns='http://www.w3.org/2001/10/synthesis' xmlns:mstts='https://www.w3.org/2001/mstts' xml:lang='en-US'>
      <voice name='{voice}'>
        <mstts:express-as style='advertisement_upbeat' styledegree='2'>
          <prosody rate='+15%' pitch='+8%' volume='+15%'>
            {escaped}
          </prosody>
        </mstts:express-as>
      </voice>
    </speak>'''

🔊 Azure TTS Synthesis

Azure Cognitive Services generates high-quality emotional audio with natural prosody and expression.

Azure TTS API

Uses Azure's neural TTS with SSML support for expressive speech synthesis.

    headers = {
        'Ocp-Apim-Subscription-Key': AZURE_API_KEY,
        'Content-Type': 'application/ssml+xml',
        'X-Microsoft-OutputFormat': 'riff-24khz-16bit-mono-pcm',
    }
    resp = requests.post(AZURE_TTS_URL, headers=headers, data=ssml.encode('utf-8'), timeout=30)

🔗 Audio Concatenation

Combine individual emotional segments into a single continuous audio track.

WAV Concatenation

Preserves audio parameters while concatenating multiple WAV files into final track.

    import os
    import wave

    def concat_wavs(wav_paths: list[str], out_path: str) -> None:
        params = None
        frames = []
        for p in wav_paths:
            if not os.path.exists(p):
                continue  # skip segments that failed to synthesize
            with wave.open(p, 'rb') as wf:
                if params is None:
                    # The first file's parameters define the output format
                    params = wf.getparams()
                frames.append(wf.readframes(wf.getnframes()))
        if not frames:
            logger.warning('No WAV frames to concatenate.')
            return
        with wave.open(out_path, 'wb') as out_wf:
            out_wf.setparams(params)
            for f in frames:
                out_wf.writeframes(f)

🎬 Video Muxing

Replace original audio with emotional track while preserving video quality.

FFmpeg Integration

Uses FFmpeg for professional video/audio muxing with automatic duration matching.

cmd = ['ffmpeg', '-y', '-i', video_path, '-i', audio_path, '-map', '0:v:0', '-map', '1:a:0', '-c:v', 'copy', '-c:a', 'aac', '-b:a', '192k', '-shortest', out_path]
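As a rough illustration, the command above could be wrapped in a helper like the `mux_audio_video` function that the main routine calls. The body below is a sketch, not the tool's actual implementation: the split into `build_mux_cmd` plus a runner, and the boolean return contract, are assumptions made for the example.

```python
import subprocess


def build_mux_cmd(video_path: str, audio_path: str, out_path: str) -> list[str]:
    """Build the FFmpeg argument list shown in the article."""
    return [
        'ffmpeg', '-y',                  # overwrite the output without prompting
        '-i', video_path,                # input 0: original video
        '-i', audio_path,                # input 1: new TTS track
        '-map', '0:v:0',                 # take the video stream from input 0
        '-map', '1:a:0',                 # take the audio stream from input 1
        '-c:v', 'copy',                  # copy video without re-encoding
        '-c:a', 'aac', '-b:a', '192k',   # encode audio as AAC at 192 kbit/s
        '-shortest',                     # stop at the end of the shorter stream
        out_path,
    ]


def mux_audio_video(video_path: str, audio_path: str, out_path: str) -> bool:
    """Run FFmpeg and report success as a bool (assumed contract)."""
    try:
        result = subprocess.run(
            build_mux_cmd(video_path, audio_path, out_path),
            capture_output=True,
            text=True,
        )
    except FileNotFoundError:
        # ffmpeg is not installed or not on PATH
        return False
    return result.returncode == 0
```

Because `-shortest` ends the output at the shorter of the two streams, a TTS track that is shorter than the video will cut the video short, so it is worth comparing durations before muxing.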

The Science of Emotional Speech

Unstyled TTS output often sounds flat and monotonous, which makes it hard to hold an audience. Our emotion enhancement combines SSML style and prosody markup with Azure's neural TTS to produce speech with more emotional variation, dynamic range, and expressive delivery, closer to what a professional voice actor would give.

🎯 SSML Markup for Expression

Advertisement Upbeat Style

    <speak version='1.0' xmlns='http://www.w3.org/2001/10/synthesis' xmlns:mstts='https://www.w3.org/2001/mstts' xml:lang='en-US'>
      <voice name='en-US-AndrewNeural'>
        <mstts:express-as style='advertisement_upbeat' styledegree='2'>
          <prosody rate='+15%' pitch='+8%' volume='+15%'>
            Your emotional text here
          </prosody>
        </mstts:express-as>
      </voice>
    </speak>

- styledegree: Controls style intensity (0.01 to 2, default 1; higher = more expressive)
- rate: Speech speed adjustment (a relative change such as +15%; Azure documents a supported range of 0.5x to 2x the original rate)
- pitch: Pitch modification for emotional emphasis (-50% to +50%)
- volume: Loudness control for impact (0% to +100%)
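The parameters listed above can be exposed as function arguments instead of being hard-coded. The following is a minimal sketch under stated assumptions: `build_ssml`, `clamp`, and `pct` are hypothetical helper names, and the 0.01 to 2 clamp for `styledegree` reflects the range as I understand it from Azure's SSML documentation rather than anything the tool itself enforces.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape


def clamp(value: float, low: float, high: float) -> float:
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def pct(value: int) -> str:
    """Format a signed percentage such as +15% or -10%."""
    return f'{value:+d}%'


def build_ssml(
    text: str,
    voice: str = 'en-US-AndrewNeural',
    style: str = 'advertisement_upbeat',
    styledegree: float = 2.0,
    rate: int = 15,
    pitch: int = 8,
    volume: int = 15,
) -> str:
    """Build an SSML document with configurable style and prosody."""
    degree = clamp(styledegree, 0.01, 2.0)  # assumed valid range
    return (
        "<speak version='1.0' xmlns='http://www.w3.org/2001/10/synthesis' "
        "xmlns:mstts='https://www.w3.org/2001/mstts' xml:lang='en-US'>"
        f"<voice name='{voice}'>"
        f"<mstts:express-as style='{style}' styledegree='{degree:g}'>"
        f"<prosody rate='{pct(rate)}' pitch='{pct(pitch)}' volume='{pct(volume)}'>"
        f"{escape(text)}"  # escape &, < and > in the transcript
        "</prosody></mstts:express-as></voice></speak>"
    )


# Parsing the result is a cheap well-formedness check before calling the API;
# ET.fromstring raises ParseError if the markup is malformed.
ET.fromstring(build_ssml('Fish & chips'))
```

Parsing the generated string locally catches unescaped characters before a request is spent on them; whether a given voice actually supports the chosen style still has to be checked against the service.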

🔊 Andrew Neural - High-Energy Voice

- Naturally expressive tone perfect for advertisements and excitement
- Supports advertisement_upbeat style for maximum energy
- Built-in prosody controls for fine-tuned emotional delivery
- Optimized for engaging, high-impact content

Technical Architecture

🧠 AI Components

- Azure Cognitive Services TTS with SSML support
- ElevenLabs Scribe for word-level transcription
- Intelligent text segmentation with boundary detection
- Emotional markup generation with style controls
- Professional audio processing and concatenation
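The segmentation step is not shown in this article, so the following is only a sketch of how word-level entries could be grouped into sentence-like segments at punctuation boundaries. It assumes each entry is a dict with `text`, `start`, and `end` keys; that shape, the `segment_words` name, and the `max_chars` cutoff are assumptions for illustration, not the tool's confirmed behavior.

```python
SENTENCE_END = ('.', '!', '?')


def segment_words(words: list[dict], max_chars: int = 200) -> list[dict]:
    """Group word-level entries into sentence-like segments."""
    segments: list[dict] = []
    current: list[dict] = []

    def flush() -> None:
        # Emit the words collected so far as one segment
        if current:
            segments.append({
                'text': ' '.join(w['text'] for w in current),
                'start': current[0]['start'],
                'end': current[-1]['end'],
            })
            current.clear()

    for w in words:
        token = w['text'].strip()
        if not token:
            continue  # skip spacing-only entries
        current.append({'text': token, 'start': w['start'], 'end': w['end']})
        length = sum(len(x['text']) + 1 for x in current)
        # Close the segment at sentence punctuation or when it grows too long
        if token.endswith(SENTENCE_END) or length >= max_chars:
            flush()
    flush()  # keep trailing words that lack final punctuation
    return segments
```

Each returned segment carries a `text` key, which is the field the main loop reads, plus the start and end times of its first and last word.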

⚙️ Processing Pipeline

- MoviePy audio extraction with codec optimization
- Transcription with word-level timestamps
- SSML building with expressive prosody controls
- Azure TTS synthesis with neural voice models
- WAV concatenation preserving audio parameters
- FFmpeg video/audio muxing with automatic duration matching
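Concatenating raw frames only preserves audio parameters if every input file shares them. The sketch below is a stricter variant that checks this explicitly; `concat_wavs_strict` is a hypothetical name, and raising `ValueError` on a mismatch is a design choice made for the example rather than the tool's behavior.

```python
import wave


def concat_wavs_strict(wav_paths: list[str], out_path: str) -> int:
    """Concatenate WAV files, refusing inputs whose format differs.

    Returns the number of frames written to out_path.
    """
    fmt = None  # (channels, sample width, frame rate) of the first file
    chunks: list[bytes] = []
    for path in wav_paths:
        with wave.open(path, 'rb') as wf:
            this_fmt = (wf.getnchannels(), wf.getsampwidth(), wf.getframerate())
            if fmt is None:
                fmt = this_fmt
            elif this_fmt != fmt:
                raise ValueError(f'{path}: format {this_fmt} differs from {fmt}')
            chunks.append(wf.readframes(wf.getnframes()))
    if fmt is None:
        raise ValueError('no input files')
    with wave.open(out_path, 'wb') as out:
        out.setnchannels(fmt[0])
        out.setsampwidth(fmt[1])
        out.setframerate(fmt[2])
        for chunk in chunks:
            out.writeframes(chunk)
    # Re-open the result to report how many frames it contains
    with wave.open(out_path, 'rb') as check:
        return check.getnframes()
```

Dividing the returned frame count by the frame rate gives the track duration in seconds, which can be compared with the video length before muxing.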

Real-World Applications

🎬 Film & Video Production

Transform documentary narration from flat delivery to emotionally engaging performances.

- Documentary voice-over enhancement for dramatic impact
- Educational content with engaging emotional delivery
- Marketing videos with high-energy persuasive narration

📚 Educational Content

Create engaging learning materials with expressive, emotionally resonant narration.

- Online course videos with dynamic emotional emphasis
- Children's educational content with expressive storytelling
- Corporate training videos with engaging emotional variation

🎮 Gaming & Interactive Media

Add emotional depth to game narration and character voices.

- Character voice acting with emotional range and expression
- Interactive story narration with dynamic emotional delivery
- Game tutorial videos with engaging emotional emphasis

🎭 Digital Storytelling

Create audiobooks and stories with professional emotional performances.

- Audiobook production with character emotional expression
- Podcast enhancement with engaging emotional delivery
- Digital storytelling with dynamic emotional variation

Core Implementation Example

Here's the essential code structure that powers the emotion enhancement:

    def main():
        if not AZURE_API_KEY:
            logger.error('AZURE_AI_API_KEY not set. Check curify_background/.env')
            sys.exit(1)

        # Step 1: Extract audio
        if not os.path.exists(AUDIO_PATH):
            if not extract_audio(VIDEO_PATH, AUDIO_PATH):
                sys.exit(1)

        # Step 2: Transcribe
        segments = transcribe(AUDIO_PATH)

        # Step 3: TTS per segment
        wav_paths: list[str] = []
        for i, seg in enumerate(segments):
            text = seg['text'].strip()
            if not text:
                continue
            out_path = os.path.join(OUTPUT_DIR, f'segment_{i:03d}.wav')
            if os.path.exists(out_path):
                logger.info('[%02d] Segment WAV already exists, skipping TTS.', i)
                wav_paths.append(out_path)
                continue
            ssml = build_emotional_ssml(text)
            logger.info('[%02d] Generating TTS: %s…', i, text[:60])
            if azure_tts(ssml, out_path):
                wav_paths.append(out_path)

        # Step 4: Concatenate
        if not wav_paths:
            logger.error('No segments synthesised.')
            sys.exit(1)
        concat_wavs(wav_paths, FULL_WAV)

        # Step 5: Mux onto original video
        if not mux_audio_video(VIDEO_PATH, FULL_WAV, OUTPUT_MP4):
            sys.exit(1)
        logger.info('All done!')

Why Emotional Enhancement Works

Key Benefits

- ✓ Perfect lip-sync with original video timing
- ✓ Natural emotional expression and variation
- ✓ High-quality neural TTS synthesis
- ✓ Intelligent text segmentation and boundary detection
- ✓ Professional audio processing pipeline
- ✓ Batch processing with consistent emotional delivery

Getting Started

Quick Start Guide

⚠️ System Requirements

- Azure AI API key with Cognitive Services access
- ElevenLabs API key for transcription services
- Python 3.9+ with MoviePy and requests libraries
- FFmpeg installed and available in PATH
- Existing MP4 video for audio extraction
- Sufficient storage for intermediate audio files
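A quick preflight check can confirm most of these requirements before a long run. This is a minimal sketch: `missing_requirements` is a hypothetical helper, `AZURE_AI_API_KEY` is the variable name used in the tool's error message, and `ELEVENLABS_API_KEY` is an assumed name that may differ in your setup.

```python
import importlib.util
import os
import shutil
import sys


def missing_requirements(env=None) -> list[str]:
    """Return human-readable problems; an empty list means ready to run."""
    env = os.environ if env is None else env
    problems: list[str] = []
    if sys.version_info < (3, 9):
        problems.append('Python 3.9 or newer is required')
    # ELEVENLABS_API_KEY is an assumed variable name
    for var in ('AZURE_AI_API_KEY', 'ELEVENLABS_API_KEY'):
        if not env.get(var):
            problems.append(f'environment variable {var} is not set')
    if shutil.which('ffmpeg') is None:
        problems.append('ffmpeg not found on PATH')
    for module in ('moviepy', 'requests'):
        if importlib.util.find_spec(module) is None:
            problems.append(f'Python package {module} is not installed')
    return problems


if __name__ == '__main__':
    for problem in missing_requirements():
        print('Missing:', problem)
```

Passing a plain dict as `env` makes the check easy to exercise without touching the real environment.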

Expected Results

The tool produces an MP4 whose video stream is copied unchanged from the source, with the original audio replaced by the more expressive TTS track.

🎭 Emotional Audio Output

High-energy expressive audio with natural prosody and emotional variation

Azure neural TTS, SSML markup, 24kHz/16bit PCM WAV format

🎬 Technical Specifications

Production-ready pipeline with Azure TTS integration and ElevenLabs transcription

Python 3.9+, Azure Cognitive Services API, MoviePy for video processing

Future Developments

We're continuously improving our emotion enhancement technology with new features and capabilities.

Upcoming Features

Related Articles

Creator Tools

Mini-Tool: Turn Images into Narrative Videos

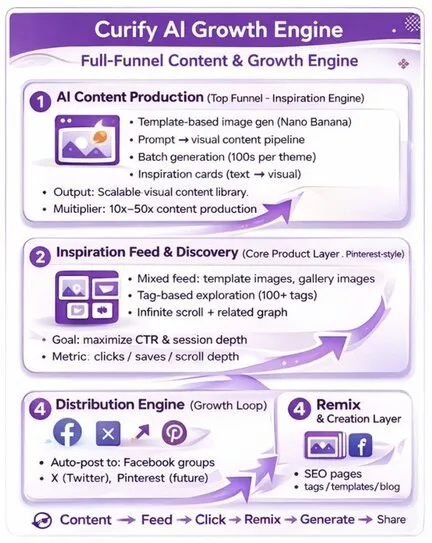

The Curify AI Growth Engine: Transforming Content Creation for UGC Creators and Marketers